mindspore.mint.nn.functional.gelu

- mindspore.mint.nn.functional.gelu(input, approximate='none')

Gaussian Error Linear Units activation function.

GeLU is described in the paper Gaussian Error Linear Units (GELUs). See also BERT: Pre-training of Deep Bidirectional Transformers for Language Understanding.

When the approximate argument is 'none', GeLU is defined as follows:

\[GELU(x_i) = x_i*P(X < x_i),\]

where \(P\) is the cumulative distribution function of the standard Gaussian distribution and \(x_i\) is the input element.
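The exact definition can be checked by hand with a small pure-Python sketch. It assumes the standard identity \(P(X < x) = 0.5 * (1 + \mathrm{erf}(x / \sqrt{2}))\) for the standard Gaussian; the helper name `gelu_exact` is hypothetical and is not part of MindSpore.

```python
import math


def gelu_exact(x: float) -> float:
    """Reference GELU for a single float: x * P(X < x).

    Hypothetical helper for illustration only, not a MindSpore API.
    """
    # Standard Gaussian CDF written in terms of the error function.
    cdf = 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))
    return x * cdf


# Evaluate at a few sample points in double precision.
for value in (1.0, 2.0, 3.0):
    print(value, gelu_exact(value))
```

Because this sketch runs in double precision, its results may differ from float32 tensor results in the last printed digit.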

When approximate argument is tanh, GeLU is estimated with:

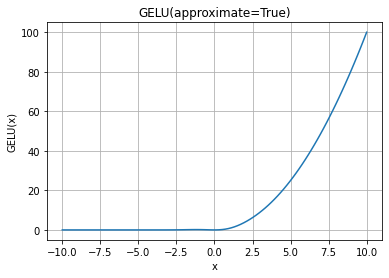

\[GELU(x_i) = 0.5 * x_i * (1 + \tanh(\sqrt(2 / \pi) * (x_i + 0.044715 * x_i^3)))\]GELU Activation Function Graph:

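- Parameters

input (Tensor) – The input tensor. Its dtype must be bfloat16, float16, float32 or float64.

approximate (str, optional) – The approximation method to use, either 'none' or 'tanh'. Default: 'none'.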

- Returns

Tensor, with the same type and shape as input.

- Raises

TypeError – If input is not a Tensor.

TypeError – If dtype of input is not bfloat16, float16, float32 or float64.

ValueError – If the value of approximate is neither 'none' nor 'tanh'.

- Supported Platforms:

Ascend

Examples

    >>> import mindspore
    >>> from mindspore import Tensor, mint
    >>> x = Tensor([1.0, 2.0, 3.0], mindspore.float32)
    >>> result = mint.nn.functional.gelu(x)
    >>> print(result)
    [0.8413447 1.9544997 2.9959505]
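To get a feel for how closely the tanh estimate tracks the exact definition, the following pure-Python sketch evaluates both formulas on the same inputs as the example above. The helpers `gelu_exact` and `gelu_tanh` are hypothetical names written for this illustration; they follow the two formulas in this page and are not MindSpore's implementation.

```python
import math


def gelu_exact(x: float) -> float:
    # x * P(X < x), with the Gaussian CDF expressed through erf (assumed identity).
    return 0.5 * x * (1.0 + math.erf(x / math.sqrt(2.0)))


def gelu_tanh(x: float) -> float:
    # tanh-based estimate, term by term as in the formula for approximate='tanh'.
    inner = math.sqrt(2.0 / math.pi) * (x + 0.044715 * x ** 3)
    return 0.5 * x * (1.0 + math.tanh(inner))


inputs = [1.0, 2.0, 3.0]
# Absolute difference between the estimate and the exact value at each input.
gaps = [abs(gelu_tanh(v) - gelu_exact(v)) for v in inputs]

for v, gap in zip(inputs, gaps):
    print(f"x={v}: exact={gelu_exact(v):.6f} tanh={gelu_tanh(v):.6f} gap={gap:.1e}")
```

On these inputs the two formulas differ by less than 1e-3. With MindSpore itself, the tanh variant is selected by passing approximate='tanh' to mint.nn.functional.gelu.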