mindspore.mint.nn.functional.elu

- mindspore.mint.nn.functional.elu(input, alpha=1.0)

Exponential Linear Unit activation function.

Applies the exponential linear unit function element-wise. The activation function is defined as:

\[\text{ELU}(x) = \begin{cases} \alpha(e^{x} - 1) & \text{if } x \le 0\\ x & \text{if } x > 0 \end{cases}\]

where \(x\) is an element of the input Tensor and \(\alpha\) is the alpha parameter, which determines the smoothness of ELU.
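
The piecewise definition can be checked outside MindSpore with a small NumPy sketch. The helper name elu_reference is hypothetical and is not part of the MindSpore API; it only mirrors the formula so that results can be compared by hand.

```python
import numpy as np


def elu_reference(x, alpha=1.0):
    """NumPy sketch of the ELU formula (hypothetical helper, not a MindSpore function)."""
    x = np.asarray(x, dtype=np.float32)
    # Clamp to <= 0 before exp() so large positive inputs cannot overflow;
    # those positions are replaced by x itself in np.where below.
    negative_branch = alpha * (np.exp(np.minimum(x, 0.0)) - 1.0)
    # x > 0 keeps x unchanged, x <= 0 uses alpha * (exp(x) - 1).
    return np.where(x > 0, x, negative_branch)


x = np.array([[-1.0, 4.0, -8.0], [2.0, -5.0, 9.0]], dtype=np.float32)
reference = elu_reference(x)
print(reference)
```

With the default alpha of 1.0, the values agree with the output shown in the Examples section to float32 precision. Negative inputs approach -alpha as they decrease, while positive inputs pass through unchanged.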

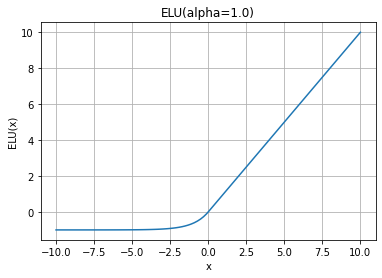

ELU function graph:

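- Parameters

input (Tensor) – The input Tensor.

alpha (float, optional) – The \(\alpha\) value of ELU. Default: 1.0.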

- Returns

Tensor, has the same shape and data type as input.

- Raises

TypeError – If alpha is not a float.

- Supported Platforms:

Ascend

Examples

    >>> import mindspore
    >>> import numpy as np
    >>> from mindspore import Tensor, mint
    >>> x = Tensor(np.array([[-1.0, 4.0, -8.0], [2.0, -5.0, 9.0]]), mindspore.float32)
    >>> output = mint.nn.functional.elu(x)
    >>> print(output)
    [[-0.63212055  4.         -0.99966455]
     [ 2.         -0.99326205  9.        ]]