mindspore.mint.nn.PReLU

- class mindspore.mint.nn.PReLU(num_parameters=1, init=0.25, dtype=None)

Applies the PReLU activation function element-wise.

PReLU is defined as:

\[\text{PReLU}(x_i) = \max(0, x_i) + w * \min(0, x_i),\]

where \(x_i\) is an element of a channel of the input.
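As a minimal plain-Python sketch of the formula for a single element (not MindSpore's implementation; the function name `prelu_scalar` is hypothetical), positive values pass through and negative values are scaled by \(w\):

```python
def prelu_scalar(x: float, w: float = 0.25) -> float:
    """Hypothetical helper: PReLU(x) = max(0, x) + w * min(0, x) for one value."""
    # For x >= 0 the second term is zero; for x < 0 the first term is zero.
    return max(0.0, x) + w * min(0.0, x)


print(prelu_scalar(2.0))   # 2.0
print(prelu_scalar(-2.0))  # -0.5
```

With the default \(w = 0.25\), an input of -2.0 maps to -0.5, while 2.0 is returned unchanged.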

Here \(w\) is a learnable parameter with a default initial value of 0.25. The number of elements of \(w\) is set by num_parameters: if num_parameters equals the number of channels of the input, each channel gets its own \(w\); if it is left at the default of 1, a single \(w\) is shared across all channels.
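The difference between a shared \(w\) and per-channel weights can be sketched in plain Python for an input of shape (N, C, L) held in nested lists. This is an illustration under that layout assumption, not the MindSpore kernel; `prelu_nc` is a hypothetical name.

```python
from typing import List


def prelu_nc(x: List[List[List[float]]], weights: List[float]) -> List[List[List[float]]]:
    """Hypothetical helper: apply PReLU to nested lists shaped (N, C, L).

    `weights` holds either 1 value (shared by all channels) or C values
    (one per channel along dimension 1).
    """
    num_channels = len(x[0]) if x else 0
    if len(weights) not in (1, num_channels):
        raise ValueError("weights must have 1 or C entries")
    out = []
    for sample in x:
        new_sample = []
        for c, channel in enumerate(sample):
            # Use the single shared weight, or this channel's own weight.
            w = weights[0] if len(weights) == 1 else weights[c]
            new_sample.append([max(0.0, v) + w * min(0.0, v) for v in channel])
        out.append(new_sample)
    return out


x = [[[-1.0, 2.0], [-4.0, 3.0]]]  # N=1, C=2, L=2
print(prelu_nc(x, [0.25]))        # [[[-0.25, 2.0], [-1.0, 3.0]]]
print(prelu_nc(x, [0.5, 0.25]))   # [[[-0.5, 2.0], [-1.0, 3.0]]]
```

With one weight, both channels scale their negative values by 0.25; with two weights, channel 0 uses 0.5 and channel 1 uses 0.25.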

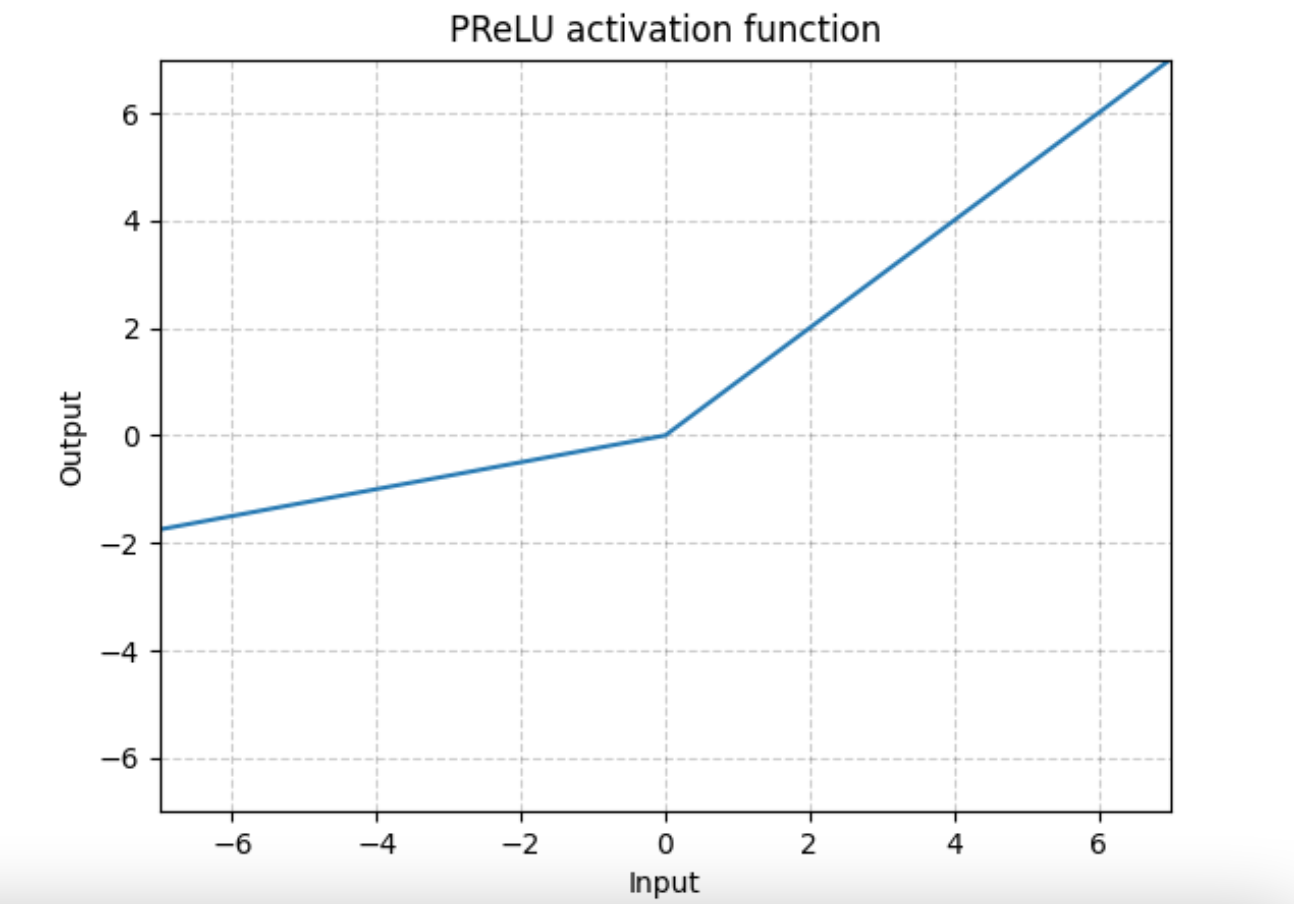

PReLU Activation Function Graph:

Note

The channel dimension is the second dimension (index 1) of input. When input has fewer than 2 dimensions, there is no channel dimension and the number of channels is 1.
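The channel-count rule in the note can be written as a small plain-Python helper over a shape tuple. This is a sketch for illustration; `num_channels` is a hypothetical name, not part of the MindSpore API.

```python
from typing import Sequence


def num_channels(shape: Sequence[int]) -> int:
    """Hypothetical helper: channel count PReLU sees for an input shape.

    The channel dimension is dimension 1; inputs with fewer than two
    dimensions have no channel dimension and count as one channel.
    """
    return shape[1] if len(shape) >= 2 else 1


print(num_channels((8, 3, 32, 32)))  # 3
print(num_channels((5,)))            # 1
print(num_channels(()))              # 1
```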

- Parameters

num_parameters (int) – number of \(w\) to learn. Although it takes an int as input, only two values are legitimate: 1, or the number of channels of the input Tensor. Default: 1.

init (float) – the initial value of \(w\). Default: 0.25.

dtype (mindspore.dtype, optional) – the type of \(w\). Default: None. Supported data types are {float16, float32, bfloat16}.

- Inputs:

input (Tensor) - The input of PReLU.

- Outputs:

Tensor, with the same dtype and shape as the input.

- Supported Platforms:

Ascend
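The constraint on num_parameters and the role of init can be illustrated with a plain-Python check. This is a hypothetical sketch, assuming the input shape is known; `init_prelu_weights` is not a MindSpore function, and the real class does not necessarily validate at this point or in this way.

```python
from typing import List, Sequence


def init_prelu_weights(num_parameters: int, input_shape: Sequence[int],
                       init: float = 0.25) -> List[float]:
    """Hypothetical helper: validate num_parameters and build initial w values."""
    # Channel dimension is dim 1; inputs with < 2 dims count as one channel.
    channels = input_shape[1] if len(input_shape) >= 2 else 1
    if num_parameters not in (1, channels):
        raise ValueError(f"num_parameters must be 1 or {channels}, got {num_parameters}")
    # Every entry of w starts at the same value `init`.
    return [init] * num_parameters


print(init_prelu_weights(3, (8, 3, 32, 32)))  # [0.25, 0.25, 0.25]
print(init_prelu_weights(1, (8, 3, 32, 32)))  # [0.25]
```

Passing num_parameters=4 for an input with 3 channels would raise a ValueError in this sketch.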

Examples

```python
>>> import mindspore
>>> from mindspore import Tensor, mint
>>> import numpy as np
>>> x = Tensor(np.array([[[[0.1, 0.6], [0.9, 0.9]]]]), mindspore.float32)
>>> prelu = mint.nn.PReLU()
>>> output = prelu(x)
>>> print(output)
[[[[0.1 0.6]
   [0.9 0.9]]]]
```