mindspore.nn.CELU

- class mindspore.nn.CELU(alpha=1.0)

Continuously differentiable exponential linear units activation function.

Applies the continuously differentiable exponential linear units function element-wise.

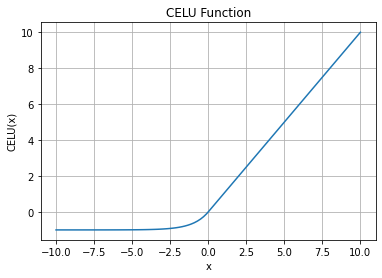

\[\text{CELU}(x) = \max(0,x) + \min(0, \alpha * (\exp(x/\alpha) - 1))\]CELU Activation Function Graph:
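The formula above can be sketched in plain NumPy to make the two branches concrete. This is an illustrative reference only, not MindSpore's implementation: the name `celu_reference` is hypothetical, and it computes in float64 for readability rather than the float16/float32 types the operator requires.

```python
import numpy as np

def celu_reference(x, alpha=1.0):
    """NumPy sketch of CELU(x) = max(0, x) + min(0, alpha * (exp(x / alpha) - 1))."""
    if alpha == 0:
        # Mirrors the documented restriction that alpha must not be 0
        raise ValueError("alpha must be non-zero")
    x = np.asarray(x, dtype=np.float64)
    # expm1(t) evaluates exp(t) - 1 accurately for t close to 0
    return np.maximum(0.0, x) + np.minimum(0.0, alpha * np.expm1(x / alpha))

# One-sided finite-difference slopes at x = 0; both should be close to 1,
# which is what "continuously differentiable" means at the joining point
h = 1e-3
left_slope = (celu_reference(0.0) - celu_reference(-h)) / h
right_slope = (celu_reference(h) - celu_reference(0.0)) / h
```

For positive inputs the second term is 0 and the input passes through unchanged. For negative inputs the first term is 0 and the output approaches \(-\alpha\) as \(x\) decreases (for positive \(\alpha\)).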

- Parameters

alpha (float) – The \(\alpha\) value for the CELU formulation. Default: 1.0.

- Inputs:

x (Tensor) - The input of CELU. The required dtype is float16 or float32. The shape is \((N,*)\), where \(*\) means any number of additional dimensions.

- Outputs:

Tensor, with the same type and shape as x.

- Raises

TypeError – If alpha is not a float.

ValueError – If alpha has the value of 0.

TypeError – If x is not a Tensor.

TypeError – If the dtype of x is neither float16 nor float32.

- Supported Platforms:

Ascend GPU CPU

Examples

    >>> import mindspore
    >>> from mindspore import Tensor, nn
    >>> import numpy as np
    >>> x = Tensor(np.array([-2.0, -1.0, 1.0, 2.0]), mindspore.float32)
    >>> celu = nn.CELU()
    >>> output = celu(x)
    >>> print(output)
    [-0.86466473 -0.63212055  1.          2.        ]
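As a sanity check, the printed values can be reproduced by hand with the standard library alone. The sketch below assumes the default `alpha = 1.0` and uses the piecewise form of the formula, which matches the max/min form when `alpha` is positive. The variable names are illustrative, and MindSpore is not involved.

```python
import math

alpha = 1.0  # default value of the alpha parameter
inputs = [-2.0, -1.0, 1.0, 2.0]

# Positive inputs pass through; negative inputs map to alpha * (exp(x / alpha) - 1)
expected = [x if x > 0 else alpha * math.expm1(x / alpha) for x in inputs]
print(expected)
```

The two negative entries agree with the example output to float32 precision: \(e^{-2} - 1 \approx -0.8646647\) and \(e^{-1} - 1 \approx -0.6321206\).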