mindspore.nn.ReLU

- class mindspore.nn.ReLU

Rectified Linear Unit activation function.

\[\text{ReLU}(x) = (x)^+ = \max(0, x)\]

It returns \(\max(0, x)\) element-wise. Specifically, neurons with a negative output are suppressed (set to 0), while active neurons are passed through unchanged.
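The element-wise rule can be checked by hand with a small reference function. The sketch below uses NumPy rather than MindSpore, and `relu_reference` is a hypothetical helper name introduced only for illustration; it mirrors the formula and is not how MindSpore implements the operator.

```python
import numpy as np


def relu_reference(x):
    """Element-wise max(0, x). A NumPy stand-in, not MindSpore's kernel."""
    x = np.asarray(x)
    # np.maximum compares each element against 0; astype keeps the input dtype.
    return np.maximum(x, 0).astype(x.dtype)


x = np.array([-1, 2, -3, 2, -1], dtype=np.float16)
y = relu_reference(x)
print(y)  # [0. 2. 0. 2. 0.]
```

Negative entries become 0 and non-negative entries are returned unchanged, so the result keeps the shape and dtype of the input.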

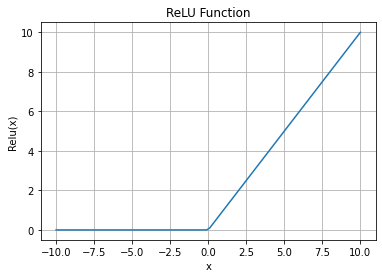

ReLU Activation Function Graph:

- Inputs:

x (Tensor) - The input to ReLU: a Tensor of any dimension whose data type is number.

- Outputs:

Tensor, with the same type and shape as x.

- Raises:

TypeError - If the dtype of x is not number.

- Supported Platforms:

Ascend GPU CPU

Examples

    >>> import mindspore
    >>> from mindspore import Tensor, nn
    >>> import numpy as np
    >>> x = Tensor(np.array([-1, 2, -3, 2, -1]), mindspore.float16)
    >>> relu = nn.ReLU()
    >>> output = relu(x)
    >>> print(output)
    [0. 2. 0. 2. 0.]