mindspore.nn.FastGelu

- class mindspore.nn.FastGelu

Fast Gaussian error linear unit activation function.

Applies the FastGelu function to each element of the input. The input is a Tensor of any valid shape.

FastGelu is defined as:

\[FastGelu(x_i) = \frac {x_i} {1 + \exp(-1.702 * \left| x_i \right|)} * \exp(0.851 * (x_i - \left| x_i \right|))\]

where \(x_i\) is an element of the input.

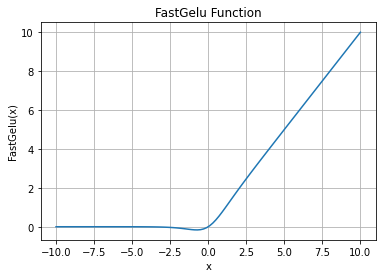

FastGelu Activation Function Graph:
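The definition above can be checked with a small plain-Python sketch that does not need MindSpore. The function names `fast_gelu_reference` and `sigmoid_form` are hypothetical helpers introduced only for illustration; this is a scalar reference for the formula, not the kernel MindSpore actually runs. Algebraically the formula equals \(x_i * \sigma(1.702 * x_i)\), where \(\sigma\) is the logistic sigmoid; a likely reason for writing it with \(\left| x_i \right|\) is that both exponentials then take non-positive arguments and cannot overflow.

```python
import math


def fast_gelu_reference(x: float) -> float:
    """Scalar reference for the documented FastGelu formula (illustrative only)."""
    abs_x = abs(x)
    # x / (1 + exp(-1.702 * |x|)) * exp(0.851 * (x - |x|))
    # Both exp() arguments are <= 0, so neither call can overflow.
    return x / (1.0 + math.exp(-1.702 * abs_x)) * math.exp(0.851 * (x - abs_x))


def sigmoid_form(x: float) -> float:
    """Algebraically equivalent form x * sigmoid(1.702 * x), written naively.

    math.exp(-1.702 * x) raises OverflowError for large negative x.
    """
    return x / (1.0 + math.exp(-1.702 * x))


# Same values as the input tensor in the Examples section.
values = [-1.0, 4.0, -8.0, 2.0, -5.0, 9.0]
outputs = [fast_gelu_reference(v) for v in values]

for v, out in zip(values, outputs):
    print(f"FastGelu({v:5.1f}) = {out: .7e}")
```

The printed values should agree with the framework output in the Examples section only up to floating-point precision, since this sketch computes in double precision.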

- Inputs:

x (Tensor) - The input of FastGelu, with data type float16 or float32. The shape is \((N,*)\), where \(*\) means any number of additional dimensions.

- Outputs:

Tensor, with the same type and shape as x.

- Raises:

TypeError – If dtype of x is neither float16 nor float32.

- Supported Platforms:

Ascend GPU CPU

Examples

>>> import mindspore
>>> from mindspore import Tensor, nn
>>> import numpy as np
>>> x = Tensor(np.array([[-1.0, 4.0, -8.0], [2.0, -5.0, 9.0]]), mindspore.float32)
>>> fast_gelu = nn.FastGelu()
>>> output = fast_gelu(x)
>>> print(output)
[[-1.5418735e-01  3.9921875e+00 -9.7473649e-06]
 [ 1.9375000e+00 -1.0052517e-03  8.9824219e+00]]