mindspore.nn.SeLU

- class mindspore.nn.SeLU

Applies activation function SeLU (Scaled exponential Linear Unit) element-wise.

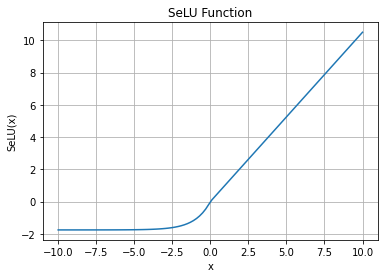

SeLU Activation Function Graph:

Refer to mindspore.ops.selu() for more details.

- Supported Platforms:

Ascend GPU CPU

Examples

    >>> import mindspore
    >>> from mindspore import Tensor, nn
    >>> import numpy as np
    >>> input_x = Tensor(np.array([[-1.0, 4.0, -8.0], [2.0, -5.0, 9.0]]), mindspore.float32)
    >>> selu = nn.SeLU()
    >>> output = selu(input_x)
    >>> print(output)
    [[-1.1113307  4.202804  -1.7575096]
     [ 2.101402  -1.7462534  9.456309 ]]
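The printed values in the example can be cross-checked without MindSpore. The following is a minimal pure-Python sketch of the standard SeLU formula, `scale * x` for `x > 0` and `scale * alpha * (exp(x) - 1)` otherwise. The constants are the ones from the original SELU definition and are assumed here to match what MindSpore uses. The helper names `selu_reference` and `selu_rows` are hypothetical and not part of the MindSpore API.

```python
import math

# Constants from the standard SELU definition; assumed (not confirmed by this
# page) to be the values MindSpore applies internally.
ALPHA = 1.6732632423543772
SCALE = 1.0507009873554805


def selu_reference(x: float) -> float:
    """Scalar reference: scale * x for x > 0, else scale * alpha * (exp(x) - 1)."""
    if x > 0:
        return SCALE * x
    # expm1(x) computes exp(x) - 1 accurately for small |x|.
    return SCALE * ALPHA * math.expm1(x)


def selu_rows(rows):
    """Apply selu_reference element-wise to a nested list (a list of rows)."""
    return [[selu_reference(v) for v in row] for row in rows]


if __name__ == "__main__":
    # Same input as the nn.SeLU example above.
    data = [[-1.0, 4.0, -8.0], [2.0, -5.0, 9.0]]
    for row in selu_rows(data):
        print([round(v, 4) for v in row])
```

The results should agree with the `nn.SeLU` output to within float32 rounding. Note that large negative inputs saturate near `-scale * alpha` (about -1.7581), which is why -8.0 and -5.0 map to nearly the same value.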