mindspore.nn.HSigmoid

- class mindspore.nn.HSigmoid

Applies the hard sigmoid activation function element-wise.

Hard sigmoid is defined as:

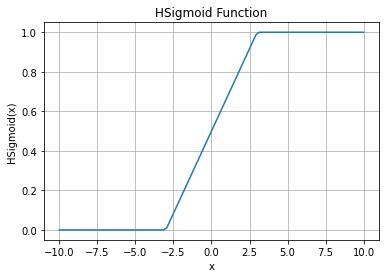

\[\text{hsigmoid}(x_{i}) = \max(0, \min(1, \frac{x_{i} + 3}{6})),\]HSigmoid Activation Function Graph:

- Inputs:

input_x (Tensor) - The input of HSigmoid. Tensor of any dimension.

- Outputs:

Tensor, with the same type and shape as input_x.

- Raises:

TypeError - If input_x is not a Tensor.

- Supported Platforms:

Ascend GPU CPU

Examples

>>> import mindspore
>>> from mindspore import Tensor, nn
>>> import numpy as np
>>> x = Tensor(np.array([-1, -2, 0, 2, 1]), mindspore.float16)
>>> hsigmoid = nn.HSigmoid()
>>> result = hsigmoid(x)
>>> print(result)
[0.3333 0.1666 0.5 0.8335 0.6665]
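
As a cross-check of the printed values, the formula can be evaluated in plain Python without MindSpore. This is a minimal sketch, not the library's implementation: `hsigmoid_ref` and `hsigmoid_list` are hypothetical helper names introduced only for illustration, and they operate on Python floats rather than Tensors.

```python
def hsigmoid_ref(x: float) -> float:
    """Hard sigmoid of one value: max(0, min(1, (x + 3) / 6)).

    Hypothetical reference helper, not part of MindSpore.
    """
    return max(0.0, min(1.0, (x + 3.0) / 6.0))


def hsigmoid_list(values):
    """Apply hsigmoid_ref element-wise to a flat list of numbers."""
    return [hsigmoid_ref(v) for v in values]


# Same inputs as the MindSpore example, rounded to four decimals.
print([round(y, 4) for y in hsigmoid_list([-1, -2, 0, 2, 1])])
# [0.3333, 0.1667, 0.5, 0.8333, 0.6667]

# Saturation: inputs at or below -3 map to 0, inputs at or above 3 map to 1.
print(hsigmoid_list([-5, -3, 3, 5]))
# [0.0, 0.0, 1.0, 1.0]
```

The double-precision results differ from the MindSpore output only in the last printed digit (for example 0.1667 versus 0.1666), which is consistent with the example running in float16.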