mindspore.mint.nn.functional.relu6

- mindspore.mint.nn.functional.relu6(input, inplace=False)

Computes the ReLU (Rectified Linear Unit) of the input tensor element-wise, with the output capped at 6.

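\[\text{ReLU6}(input) = \min(\max(0, input), 6)\]

It returns \(\min(\max(0, input), 6)\) element-wise. Negative values map to 0, values greater than 6 map to 6, and values in \([0, 6]\) pass through unchanged.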
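As a rough illustration of the formula, not MindSpore's implementation, the element-wise clamp can be sketched in NumPy. The helper name `relu6_reference` is hypothetical, and the sketch assumes a NumPy array input rather than a MindSpore `Tensor`.

```python
import numpy as np


def relu6_reference(x):
    """Hypothetical NumPy sketch of ReLU6(x) = min(max(0, x), 6), element-wise."""
    x = np.asarray(x)
    # Inner max(0, x) is the plain ReLU; the outer min(..., 6) caps the result at 6.
    out = np.minimum(np.maximum(x, 0), 6)
    # Cast back to the input dtype (a no-op when NumPy already kept it).
    return out.astype(x.dtype, copy=False)


x = np.array([[-1.0, 4.0, -8.0], [2.0, -5.0, 9.0]], dtype=np.float32)
print(relu6_reference(x))
```

Because ReLU6 is simply a clamp to \([0, 6]\), `np.clip(x, 0, 6)` gives the same values.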

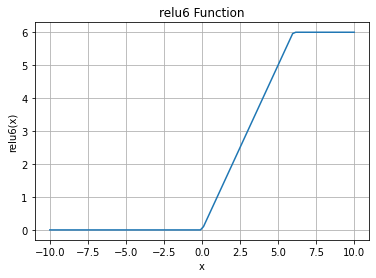

ReLU6 Activation Function Graph:

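- Parameters

  - input (Tensor) - The input tensor.
  - inplace (bool, optional) - Whether to perform the operation in place, overwriting `input`. Default: `False`.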

- Returns

Tensor, with the same dtype and shape as the input.
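The `inplace` flag is assumed here to follow the common convention of writing the result back into `input` instead of allocating a new tensor. The NumPy sketch below illustrates that assumed behavior together with the shape and dtype preservation stated above; `relu6_sketch` is a hypothetical helper, not part of MindSpore.

```python
import numpy as np


def relu6_sketch(x: np.ndarray, inplace: bool = False) -> np.ndarray:
    """Hypothetical NumPy analogue of relu6's `inplace` flag (an assumption, not MindSpore code)."""
    if inplace:
        # Write the clamped values into x's own buffer and return x itself.
        np.clip(x, 0, 6, out=x)
        return x
    # Out-of-place: allocate a new array with the same shape and dtype; x is unchanged.
    return np.clip(x, 0, 6)


a = np.array([-1.0, 3.0, 7.5], dtype=np.float32)
b = relu6_sketch(a)                # a still holds [-1.0, 3.0, 7.5]
c = relu6_sketch(a, inplace=True)  # a is overwritten and c is the same object as a
```

Returning the same object in the in-place branch mirrors how in-place variants are commonly exposed, so callers can chain calls either way.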

- Raises

- Supported Platforms:

Ascend

Examples

>>> import mindspore
>>> import numpy as np
>>> from mindspore import Tensor, mint
>>> x = Tensor(np.array([[-1.0, 4.0, -8.0], [2.0, -5.0, 9.0]]), mindspore.float32)
>>> result = mint.nn.functional.relu6(x)
>>> print(result)
[[0. 4. 0.]
 [2. 0. 6.]]