mindspore.mint.nn.functional.elu_

- mindspore.mint.nn.functional.elu_(input, alpha=1.0)

Exponential Linear Unit activation function

Applies the exponential linear unit function element-wise, modifying the input in place. The activation function is defined as:

\[ELU_{i} = \begin{cases} x_i, &\text{if } x_i \geq 0; \cr \alpha * (\exp(x_i) - 1), &\text{otherwise.} \end{cases}\]

where \(x_i\) is an element of the input and \(\alpha\) is the alpha parameter, which controls the smoothness of the ELU.
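The piecewise definition can be checked by hand with a minimal pure-Python sketch. The helper name `elu_reference` is hypothetical and is not part of MindSpore; unlike `elu_`, it returns a new list instead of modifying its argument, and it computes in double precision, so the trailing digits can differ slightly from a float32 tensor result.

```python
import math


def elu_reference(values, alpha=1.0):
    """Hypothetical reference helper: applies the ELU formula to each element.

    Returns a new list; it does not modify `values` in place.
    """
    return [x if x >= 0 else alpha * (math.exp(x) - 1.0) for x in values]


# Same input values as the example on this page, with the default alpha=1.0.
result = elu_reference([-1.0, -2.0, 0.0, 2.0, 1.0])
print([round(v, 6) for v in result])
# [-0.632121, -0.864665, 0.0, 2.0, 1.0]
```

Non-negative elements pass through unchanged, while negative elements approach \(-\alpha\) as they decrease, so a larger alpha deepens the negative saturation value.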

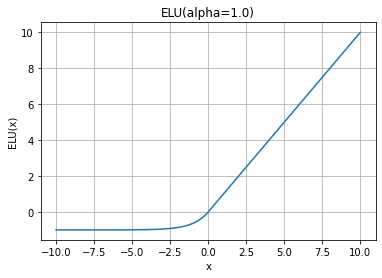

ELU Activation Function Graph:

Warning

This is an experimental API that is subject to change or deletion.

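- Parameters

input (Tensor) – The input tensor, which is modified in place. Its dtype must be float16, float32 or bfloat16.

alpha (float, optional) – The \(\alpha\) coefficient applied to negative elements. Default: 1.0.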

- Returns

Tensor, with the same shape and type as the input.

- Raises

RuntimeError – If the dtype of input is not float16, float32 or bfloat16.

TypeError – If the dtype of alpha is not float.

- Supported Platforms:

Ascend

Examples

>>> import mindspore
>>> from mindspore import Tensor, mint
>>> import numpy as np
>>> input = Tensor(np.array([-1, -2, 0, 2, 1]), mindspore.float32)
>>> mint.nn.functional.elu_(input)
>>> print(input)
[-0.63212055 -0.86466473  0.          2.          1.        ]