mindspore.mint.nn.GELU

- class mindspore.mint.nn.GELU(approximate='none')

Activation function GELU (Gaussian Error Linear Unit).

For more details, refer to the paper Gaussian Error Linear Units (GELUs), or the paper BERT: Pre-training of Deep Bidirectional Transformers for Language Understanding.
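As a rough illustration of what the two `approximate` modes compute, the sketch below evaluates GELU on plain Python floats. It assumes the standard definitions from the GELU paper: `'none'` is taken to be the exact erf-based form x·Φ(x), and `'tanh'` the tanh approximation. The helper names `gelu_exact` and `gelu_tanh` are hypothetical and not part of MindSpore.

```python
import math


def gelu_exact(x: float) -> float:
    """Assumed behaviour of approximate='none': x * Phi(x), Phi = standard normal CDF."""
    return 0.5 * x * (1.0 + math.erf(x / math.sqrt(2.0)))


def gelu_tanh(x: float) -> float:
    """Assumed behaviour of approximate='tanh': the tanh-based approximation."""
    inner = math.sqrt(2.0 / math.pi) * (x + 0.044715 * x ** 3)
    return 0.5 * x * (1.0 + math.tanh(inner))


# Compare the two forms on a few sample inputs.
for x in (-1.0, 2.0, 4.0):
    print(f"x={x:+.1f}  exact={gelu_exact(x):.8f}  tanh={gelu_tanh(x):.8f}")
```

The two forms agree to about three or four decimal places on these inputs, which is consistent with the small differences between the two printed outputs in the Examples section.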

Refer to

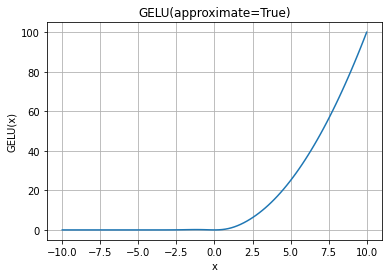

mindspore.mint.nn.functional.gelu()for more details.GELU Activation Function Graph:

- Supported Platforms:

Ascend

Examples

    >>> import mindspore
    >>> import numpy as np
    >>> input = mindspore.tensor(np.array([[-1.0, 4.0, -8.0], [2.0, -5.0, 9.0]]), mindspore.float32)
    >>> gelu = mindspore.mint.nn.GELU()
    >>> output = gelu(input)
    >>> print(output)
    [[-1.58655241e-01  3.99987316e+00 -0.00000000e+00]
     [ 1.95449972e+00 -1.41860323e-06  9.0000000e+00]]
    >>> gelu = mindspore.mint.nn.GELU(approximate="tanh")
    >>> output = gelu(input)
    >>> print(output)
    [[-1.58808023e-01  3.99992990e+00 -3.10779147e-21]
     [ 1.95459759e+00 -2.29180174e-07  9.0000000e+00]]