Model Inference (Java)

Overview

After the model is converted into a .ms model by using the MindSpore Lite model conversion tool, the inference process can be performed in Runtime. For details, see Converting Models for Inference. This tutorial describes how to use the Java API to perform inference.

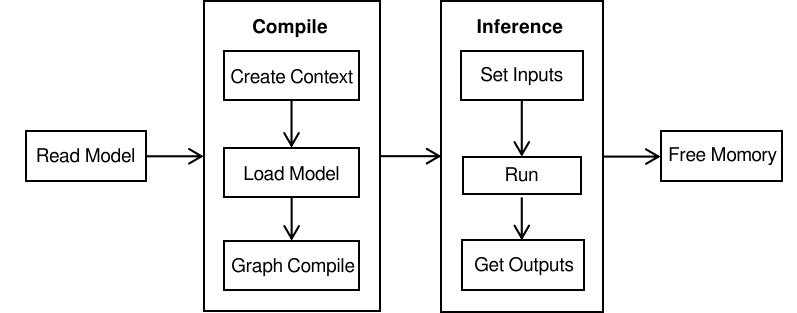

If MindSpore Lite is used in an Android project, you can use either the C++ API or the Java API to run the inference framework. Unlike the C++ API, the Java API can be called directly from Java classes, so you do not need to implement any code at the JNI layer. To run the MindSpore Lite inference framework, perform the following steps:

1. Load the model (optional): Read the .ms model converted by the model conversion tool introduced in Converting Models for Inference from the file system.

2. Create a configuration context: Create an MSContext to save the basic configuration parameters that guide graph build and execution, including deviceType (device type), threadNum (number of threads), cpuBindMode (CPU core binding mode), and isEnableFloat16 (whether to preferentially use the float16 operator).

3. Build a graph: Before performing inference, call the build API of Model to build the graph, which includes graph partitioning and operator selection and scheduling. This step takes a long time. Therefore, it is recommended to create a Model once, build the graph once, and then perform inference multiple times.

4. Input data: Before the graph is executed, fill the input tensors with data.

5. Perform inference: Call predict of Model to perform model inference.

6. Obtain the output: After the graph execution is complete, obtain the inference result from the output tensors.

7. Release the memory: When the MindSpore Lite inference framework is no longer required, release the created Model.

For details about the calling process of MindSpore Lite inference, see Experience Java Simple Inference Demo.

Referencing the MindSpore Lite Java Library

Linux X86 Project Referencing the JAR Library

When using Maven as the build tool, you can copy mindspore-lite-java.jar to the lib directory in the root directory and add the dependency of the JAR package to pom.xml.

<dependencies>
    <dependency>
        <groupId>com.mindspore.lite</groupId>
        <artifactId>mindspore-lite-java</artifactId>
        <version>1.0</version>
        <scope>system</scope>
        <systemPath>${project.basedir}/lib/mindspore-lite-java.jar</systemPath>
    </dependency>
</dependencies>

Android Projects Referencing the AAR Library

When Gradle is used as the build tool, move the mindspore-lite-{version}.aar file to the libs directory of the target module. Then, in the build.gradle file of the target module, add the local reference directory to repositories and add the AAR dependency to dependencies, as follows:

Note that mindspore-lite-{version} is the AAR file name. Replace {version} with the corresponding version information.

repositories {
    flatDir {
        dirs 'libs'
    }
}

dependencies {
    implementation fileTree(dir: "libs", include: ['*.aar'])
}

Loading a Model

Before performing model inference, MindSpore Lite needs to load the .ms model converted by the model conversion tool from the file system and parse the model.

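The following sample code reads the model file from the specified file path.

import java.io.IOException;
import java.io.RandomAccessFile;
import java.nio.MappedByteBuffer;
import java.nio.channels.FileChannel;

// Load the .ms model into a memory-mapped buffer.
MappedByteBuffer byteBuffer = null;
// try-with-resources closes the file and channel; the mapping stays valid afterwards.
try (RandomAccessFile file = new RandomAccessFile(fileName, "r");
     FileChannel fc = file.getChannel()) {
    byteBuffer = fc.map(FileChannel.MapMode.READ_ONLY, 0, fc.size()).load();
} catch (IOException e) {
    e.printStackTrace();
}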
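The sample above leaves byteBuffer set to null when reading fails, so callers have to check for null before building the model. As an alternative, the loading step can be wrapped in a helper that propagates the IOException instead. The following is a minimal sketch using only the standard java.nio API; the class name ModelFileLoader and the method name loadModel are hypothetical and are not part of MindSpore Lite.

```java
import java.io.IOException;
import java.io.RandomAccessFile;
import java.nio.MappedByteBuffer;
import java.nio.channels.FileChannel;

// Hypothetical helper (not part of MindSpore Lite) that memory-maps a model file.
final class ModelFileLoader {
    private ModelFileLoader() {}

    // Maps the whole file read-only and asks the OS to load it into memory (best effort).
    // Throws IOException instead of returning null when the file cannot be read.
    static MappedByteBuffer loadModel(String fileName) throws IOException {
        try (RandomAccessFile file = new RandomAccessFile(fileName, "r");
             FileChannel channel = file.getChannel()) {
            // The mapping remains valid after the channel is closed.
            return channel.map(FileChannel.MapMode.READ_ONLY, 0, channel.size()).load();
        }
    }
}
```

With this shape, a missing or unreadable model file surfaces as an exception at the call site rather than as a null buffer later in the pipeline.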

Creating a Configuration Context

Create the configuration context MSContext to save the basic configuration parameters required by the model to guide graph build and execution. Use the init interface to configure the number of threads, the thread affinity, and whether to enable heterogeneous parallel inference. MindSpore Lite has a built-in thread pool shared by processes. During inference, threadNum specifies the maximum number of threads in the thread pool. The default value is 2.

MindSpore Lite supports heterogeneous inference. The preferred backend for inference is specified by the deviceType argument of addDeviceInfo. Currently, CPU, GPU and NPU are supported. During graph build, operator selection and scheduling are performed based on the preferred backend. If the backend supports Float16, you can preferentially use the Float16 operator by setting isEnableFloat16 to true. For an NPU backend, you can also set the NPU frequency. The frequency can be set to 1 (low power consumption), 2 (balanced), 3 (high performance), or 4 (extreme performance). The default value is 3.

Configuring the CPU Backend

If inference is to run on the CPU backend, call addDeviceInfo after MSContext is initialized. In addition, the CPU backend supports setting the core binding mode and whether to preferentially use the float16 operator.

The following sample code from MainActivity.java demonstrates how to create a CPU backend, set the CPU core binding mode to large-core priority, and enable float16 inference:

MSContext context = new MSContext();

context.init(2, CpuBindMode.HIGHER_CPU);

context.addDeviceInfo(DeviceType.DT_CPU, true);

Float16 takes effect only when the CPU is of the ARM v8.2 architecture. Unsupported device models and x86 platforms automatically fall back to float32.

Configuring the GPU Backend

If the backend is heterogeneous inference based on CPU and GPU, call addDeviceInfo to add the GPU device information first and then the CPU device information. After this configuration, GPU inference is used preferentially. In addition, if isEnableFloat16 is set to true, both the GPU and the CPU preferentially use the float16 operator.

The following sample code demonstrates how to create the CPU and GPU heterogeneous inference backend and how to enable float16 inference for the GPU.

MSContext context = new MSContext();

context.init(2, CpuBindMode.MID_CPU);

context.addDeviceInfo(DeviceType.DT_GPU, true);

context.addDeviceInfo(DeviceType.DT_CPU, true);

Currently, the GPU backend can run only on Android mobile devices. Therefore, it is supported only by the AAR library.

Configuring the NPU Backend

If the backend is heterogeneous inference based on CPU and NPU, call addDeviceInfo to add the Kirin NPU device information first and then the CPU device information. After this configuration, NPU inference is used preferentially. In addition, if isEnableFloat16 is set to true, both the NPU and the CPU preferentially use the float16 operator.

The following sample code demonstrates how to create the CPU and NPU heterogeneous inference backend and how to enable float16 inference for the NPU. The Kirin NPU frequency is set through the third argument of addDeviceInfo.

MSContext context = new MSContext();

context.init(2, CpuBindMode.MID_CPU);

context.addDeviceInfo(DeviceType.DT_NPU, true, 3);

context.addDeviceInfo(DeviceType.DT_CPU, true);
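The NPU frequency in the sample above is passed as a bare integer. To make call sites easier to read, the four documented levels can be given names. The following is a minimal sketch; the enum NpuFrequencyLevel is hypothetical and is not part of the MindSpore Lite API. It only wraps the integer values 1 to 4 described earlier in this section.

```java
// Hypothetical enum (not part of MindSpore Lite) that names the documented
// NPU frequency levels so call sites avoid bare integers.
enum NpuFrequencyLevel {
    LOW_POWER(1),
    BALANCED(2),
    HIGH_PERFORMANCE(3), // documented default
    EXTREME_PERFORMANCE(4);

    private final int value;

    NpuFrequencyLevel(int value) {
        this.value = value;
    }

    // Integer value to pass as the frequency argument for the NPU device.
    int value() {
        return value;
    }

    // Looks up a level by its integer value; rejects values outside 1..4.
    static NpuFrequencyLevel fromValue(int value) {
        for (NpuFrequencyLevel level : values()) {
            if (level.value == value) {
                return level;
            }
        }
        throw new IllegalArgumentException("Unsupported NPU frequency: " + value);
    }
}
```

Under this assumption, the NPU line of the sample could be written as context.addDeviceInfo(DeviceType.DT_NPU, true, NpuFrequencyLevel.HIGH_PERFORMANCE.value()).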

Loading and Compiling a Model

When MindSpore Lite is used for inference, Model is the main entry point. It covers model loading, model compilation and model execution. Using the MSContext created in the previous step, call the composite build interface to load and compile the model in a single call.

The following sample code demonstrates how to load and compile a model.

Model model = new Model();

// msContext is the MSContext created in the previous step.
boolean ret = model.build(filePath, ModelType.MT_MINDIR, msContext);

Inputting Data

MindSpore Lite Java APIs provide the getInputByTensorName and getInputs methods to obtain the input tensors. Both the byte[] and ByteBuffer data types are supported. You can set the data of an input tensor by calling setData.

Use the getInputByTensorName method to obtain a model input tensor based on its name. The following sample code from MainActivity.java demonstrates how to call getInputByTensorName to obtain the input tensor and fill in data.

MSTensor inputTensor = model.getInputByTensorName("2031_2030_1_construct_wrapper:x");
// Set Input Data.
inputTensor.setData(inputData);

Use the getInputs method to directly obtain the list of all model input tensors. The following sample code from MainActivity.java demonstrates how to call getInputs to obtain the input tensors and fill in the data.

List<MSTensor> inputs = model.getInputs();
MSTensor inputTensor = inputs.get(0);
// Set Input Data.
inputTensor.setData(inputData);
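Because setData takes a byte[] or a ByteBuffer, numeric input such as a float[] has to be packed into bytes first. The following is a minimal sketch using only java.nio. It assumes the model expects float32 input in the platform's native byte order, which should be checked against the actual input data type of your model. The class TensorBytes and its methods are hypothetical helpers, and a direct buffer is used as a conservative choice for handing data to native code.

```java
import java.nio.ByteBuffer;
import java.nio.ByteOrder;

// Hypothetical helper (not part of MindSpore Lite) for packing float input data.
final class TensorBytes {
    private TensorBytes() {}

    // Packs float values into a direct ByteBuffer using native byte order.
    // The returned buffer has position 0 and limit equal to its capacity.
    static ByteBuffer toByteBuffer(float[] values) {
        ByteBuffer buffer = ByteBuffer.allocateDirect(values.length * Float.BYTES)
                .order(ByteOrder.nativeOrder());
        // Writing through the float view does not move the byte buffer's position.
        buffer.asFloatBuffer().put(values);
        return buffer;
    }

    // Packs float values into a byte[] using native byte order.
    static byte[] toByteArray(float[] values) {
        ByteBuffer buffer = ByteBuffer.allocate(values.length * Float.BYTES)
                .order(ByteOrder.nativeOrder());
        buffer.asFloatBuffer().put(values);
        return buffer.array();
    }
}
```

Either result could then be used as the inputData argument in the samples above.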

Executing Inference

After the model is built, call the predict function of Model to perform model inference.

The following sample code demonstrates how to call predict to perform inference.

// Run graph to infer results.

boolean ret = model.predict();

Obtaining the Output

After inference is complete, you can obtain the inference result from the output tensors. MindSpore Lite provides three methods to obtain the output MSTensor of a model, and MSTensor supports the getByteData, getFloatData, getIntData and getLongData methods to read the output data.

Use the getOutputs method to directly obtain the list of all model output MSTensor objects. The following sample code from MainActivity.java demonstrates how to call getOutputs to obtain the output tensors.

List<MSTensor> outTensors = model.getOutputs();

Use the getOutputsByNodeName method to obtain the list of output MSTensor objects connected to a model output node, based on the name of that node. The following sample code from MainActivity.java demonstrates how to call getOutputsByNodeName to obtain the output tensors.

List<MSTensor> outTensors = model.getOutputsByNodeName("Default/head-MobileNetV2Head/Softmax-op204");
// Apply infer results.
...

Use the getOutputByTensorName method to obtain the model output MSTensor based on the name of the model output tensor. The following sample code from MainActivity.java demonstrates how to call getOutputByTensorName to obtain the output tensor.

MSTensor outTensor = model.getOutputByTensorName("Default/head-MobileNetV2Head/Softmax-op204");
// Apply infer results.
...
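For a classification output such as the Softmax tensor named above, the float array read from the output tensor is typically reduced to a predicted class index. The following is a minimal sketch of that post-processing step in plain Java. The class Scores is hypothetical, and the scores parameter stands in for the array returned by getFloatData on the output tensor.

```java
// Hypothetical post-processing helper (not part of MindSpore Lite).
final class Scores {
    private Scores() {}

    // Returns the index of the largest value, or -1 for an empty array.
    // When several entries share the maximum, the first one wins.
    static int argMax(float[] scores) {
        int best = -1;
        for (int i = 0; i < scores.length; i++) {
            if (best < 0 || scores[i] > scores[best]) {
                best = i;
            }
        }
        return best;
    }
}
```

Mapping the returned index to a label depends on the label file of the model, which is outside the scope of this sketch.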

Releasing the Memory

When the MindSpore Lite inference framework is no longer required, you need to release the created Model. The following sample code from MainActivity.java demonstrates how to release the memory before the program ends.

model.free();

Advanced Usage

Resizing the Input Dimension

When using MindSpore Lite for inference, if you need to resize the input shape, you can call the resize API of Model to reset the shape of the input tensor after building a model.

Some networks do not support variable dimensions. As a result, an error message is displayed and the model exits unexpectedly. For example, the model contains the MatMul operator, one input tensor of the MatMul operator is the weight, and the other input tensor is the input. If a variable dimension API is called, the input tensor does not match the shape of the weight tensor. As a result, the inference fails.

The following sample code from MainActivity.java demonstrates how to perform resize on the input tensor of MindSpore Lite:

List<MSTensor> inputs = model.getInputs();
// New shape for the first (and only) input tensor.
int[][] dims = {{1, 300, 300, 3}};
boolean ret = model.resize(inputs, dims);
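After a resize, the data passed to setData has to match the new shape. The following is a minimal sketch for computing the expected element count and byte size of a shape, assuming float32 data at 4 bytes per element; other data types need a different element size. The class ShapeUtil is hypothetical and is not part of MindSpore Lite.

```java
// Hypothetical helper (not part of MindSpore Lite) for sizing input buffers.
final class ShapeUtil {
    private ShapeUtil() {}

    // Multiplies all dimensions; an empty shape yields 1 (a scalar).
    // Rejects non-positive dimensions and overflows.
    static long elementCount(int[] shape) {
        long count = 1;
        for (int dim : shape) {
            if (dim <= 0) {
                throw new IllegalArgumentException("Invalid dimension: " + dim);
            }
            count = Math.multiplyExact(count, (long) dim);
        }
        return count;
    }

    // Byte size of the shape when each element is a float32 (assumption).
    static long float32ByteSize(int[] shape) {
        return Math.multiplyExact(elementCount(shape), (long) Float.BYTES);
    }
}
```

For the shape {1, 300, 300, 3} used above, this gives 270000 elements and 1080000 bytes.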

Viewing Logs

If an exception occurs during inference, you can view logs to locate the fault. For the Android platform, use the Logcat command line to view the MindSpore Lite inference log information and use MS_LITE to filter the log information.

logcat -s "MS_LITE"

Obtaining the Version Number

MindSpore Lite provides the version method of the Version class to obtain the version number. The class is imported as com.mindspore.lite.config.Version in the sample code below. You can call this method to obtain the version number of MindSpore Lite.

The following sample code from MainActivity.java demonstrates how to obtain the version number of MindSpore Lite:

import com.mindspore.lite.config.Version;

String version = Version.version();