mindspore.mint.nn.functional.softshrink

- mindspore.mint.nn.functional.softshrink(input, lambd=0.5)

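Soft Shrink activation function, applied element-wise. Each element of the input whose absolute value exceeds \(\lambda\) is shifted toward zero by \(\lambda\), and every other element is set to 0.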

The formula is defined as follows:

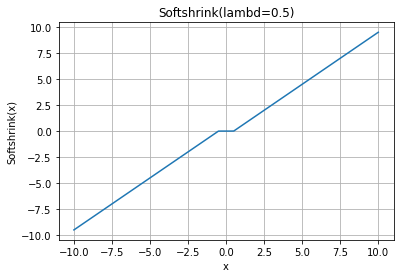

\[\begin{split}\text{SoftShrink}(x) = \begin{cases} x - \lambda, & \text{ if } x > \lambda \\ x + \lambda, & \text{ if } x < -\lambda \\ 0, & \text{ otherwise } \end{cases}\end{split}\]

SoftShrink Activation Function Graph:
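To make the three cases of the formula concrete, here is a minimal NumPy sketch. It is not MindSpore's kernel: `softshrink_ref` is a hypothetical helper written only to mirror the piecewise definition, and it assumes `lambd >= 0` as the parameter description requires.

```python
import numpy as np


def softshrink_ref(x, lambd=0.5):
    """Hypothetical NumPy reference for the piecewise SoftShrink formula."""
    if lambd < 0:
        raise ValueError("lambd should be greater than or equal to 0")
    x = np.asarray(x)
    # Case 1: x > lambd  -> x - lambd
    # Case 2: x < -lambd -> x + lambd
    # Case 3: otherwise  -> 0
    return np.where(x > lambd, x - lambd,
                    np.where(x < -lambd, x + lambd, np.zeros_like(x)))


x = np.array([-1.0, -0.2, 0.0, 0.3, 2.0], dtype=np.float32)
print(softshrink_ref(x))
```

With the default threshold of 0.5, the elements -0.2, 0.0 and 0.3 fall inside \([-\lambda, \lambda]\) and become 0, while -1.0 and 2.0 move toward zero by 0.5, giving -0.5 and 1.5.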

- Parameters

input (Tensor) – The input of Soft Shrink. Supported dtypes:

Ascend: float16, float32, bfloat16.

lambd (number, optional) – The threshold \(\lambda\) defined by the Soft Shrink formula. It should be greater than or equal to 0. Default: 0.5.
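The threshold sets the width of the dead zone \([-\lambda, \lambda]\) that is mapped to 0. The NumPy sketch below uses a hypothetical helper, not MindSpore code, built on the equivalent closed form \(\operatorname{sign}(x)\cdot\max(|x| - \lambda, 0)\) to compare several thresholds. With \(\lambda = 0\) the function returns its input unchanged.

```python
import numpy as np


def softshrink_closed_form(x, lambd=0.5):
    """Hypothetical helper: sign(x) * max(|x| - lambd, 0), equivalent to the piecewise formula."""
    x = np.asarray(x, dtype=np.float32)
    return np.sign(x) * np.maximum(np.abs(x) - lambd, 0)


x = np.array([-2.0, -0.75, -0.25, 0.0, 0.25, 0.75, 2.0], dtype=np.float32)
for lambd in (0.0, 0.5, 1.0):
    # A larger threshold zeros out more elements and shifts the rest further toward 0
    print(lambd, softshrink_closed_form(x, lambd))
```

The closed form avoids nested branches. It agrees with the piecewise definition in every case, apart from possibly producing a signed zero (-0.0) for small negative inputs.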

- Returns

Tensor, with the same data type and shape as the input tensor.
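The return contract (same shape and dtype as the input) can be illustrated with a NumPy sketch at float16, one of the dtypes listed for Ascend. This only mirrors the documented behavior on the host. `softshrink_ref` is a hypothetical helper, and bfloat16 is left out because NumPy has no native bfloat16 type.

```python
import numpy as np


def softshrink_ref(x, lambd=0.5):
    """Hypothetical NumPy reference that keeps the input's shape and dtype."""
    x = np.asarray(x)
    lam = x.dtype.type(lambd)  # cast the threshold so the arithmetic stays in x's dtype
    zero = np.zeros_like(x)
    return np.where(x > lam, x - lam, np.where(x < -lam, x + lam, zero))


x = np.random.default_rng(0).standard_normal((2, 3, 4)).astype(np.float16)
y = softshrink_ref(x)
print(x.shape, x.dtype, "->", y.shape, y.dtype)
```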

- Raises

- Supported Platforms:

Ascend

Examples

>>> import mindspore
>>> from mindspore import Tensor
>>> from mindspore import mint
>>> import numpy as np
>>> x = Tensor(np.array([[ 0.5297, 0.7871, 1.1754], [ 0.7836, 0.6218, -1.1542]]), mindspore.float16)
>>> output = mint.nn.functional.softshrink(x)
>>> print(output)
[[ 0.02979  0.287    0.676  ]
 [ 0.2837   0.1216  -0.6543 ]]
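The printed values carry only about four significant digits, which appears consistent with half-precision (float16) arithmetic. The NumPy sketch below is a cross-check that does not need an Ascend device. It rounds the same inputs to float16, applies the formula, and compares the result against the printed output. It is an independent reference, not a call into MindSpore.

```python
import numpy as np

# Same inputs as the example above, rounded to float16
x = np.array([[0.5297, 0.7871, 1.1754],
              [0.7836, 0.6218, -1.1542]], dtype=np.float16)
lambd = np.float16(0.5)

# Piecewise SoftShrink: x - lambd above the threshold, x + lambd below -lambd, else 0
out = np.where(x > lambd, x - lambd,
               np.where(x < -lambd, x + lambd, np.zeros_like(x)))

# Values as printed in the example output
expected = np.array([[0.02979, 0.287, 0.676],
                     [0.2837, 0.1216, -0.6543]], dtype=np.float32)
print(out)
print(np.allclose(out.astype(np.float32), expected, atol=1e-3))
```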