mindspore.mint.nn.functional.selu

- mindspore.mint.nn.functional.selu(input)

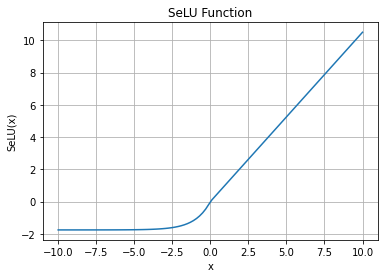

Activation function SELU (Scaled Exponential Linear Unit).

The activation function is defined as:

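\[E_{i} = \text{scale} * \begin{cases} x_{i}, &\text{if } x_{i} \geq 0; \cr \text{alpha} * (\exp(x_{i}) - 1), &\text{otherwise.} \end{cases}\]

where \(x_{i}\) is an element of the input, \(E_{i}\) is the corresponding element of the output, and \(\text{alpha}\) and \(\text{scale}\) are pre-defined constants (\(\text{alpha}=1.67326324\) and \(\text{scale}=1.05070098\)).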
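The piecewise definition above can be checked by hand with a few lines of plain Python. This is only a minimal reference sketch of the formula, not MindSpore's implementation: the names `ALPHA`, `SCALE`, and `selu_scalar` are illustrative, and the arithmetic runs in Python's double precision rather than the float16, float32, or bfloat16 of a real tensor.

```python
import math

# Constants as given in the definition above.
ALPHA = 1.67326324
SCALE = 1.05070098


def selu_scalar(x: float) -> float:
    """Apply the SELU formula to a single value (hypothetical helper)."""
    if x >= 0:
        return SCALE * x
    # math.expm1(x) computes exp(x) - 1 accurately for x close to zero.
    return SCALE * ALPHA * math.expm1(x)


# Same input values as the Examples section of this page.
rows = [[-1.0, 4.0, -8.0], [2.0, -5.0, 9.0]]
reference = [[selu_scalar(v) for v in row] for row in rows]

for row in reference:
    print([round(v, 6) for v in row])
```

For negative inputs the result approaches \(-\text{scale} * \text{alpha}\) (about -1.7581) as the input decreases, which is why -5.0 and -8.0 map to nearly the same value.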

See more details in Self-Normalizing Neural Networks.

SELU Activation Function Graph:

- Parameters

input (Tensor) – Tensor of any dimension. The data type must be float16, float32, or bfloat16.

- Returns

Tensor, with the same type and shape as the input.

- Raises

TypeError – If the dtype of input is not float16, float32, or bfloat16.

- Supported Platforms:

Ascend

Examples

>>> import mindspore
>>> from mindspore import Tensor, mint
>>> import numpy as np
>>> input = Tensor(np.array([[-1.0, 4.0, -8.0], [2.0, -5.0, 9.0]]), mindspore.float32)
>>> output = mint.nn.functional.selu(input)
>>> print(output)
[[-1.1113307  4.202804  -1.7575096]
 [ 2.101402  -1.7462534  9.456309 ]]